OmniSense v2: A Human-Skin-Inspired Visuotactile Sensor for Unified Tactile Imaging

Human skin, the largest organ of the human body, is a naturally integrated, complex sensing platform. It is inherently multimodal: through specialized receptors, it simultaneously senses stimuli such as shape, texture, temperature, and other fine details. With a single touch, it can distinguish between physical objects and their material properties. This multimodal capability underpins humans' ability to reliably feel, understand, and manipulate objects in unstructured environments.

Replicating the sensing capabilities of skin is an engineering challenge. Many current tactile and visuotactile sensors are optimized for a specific sensing target, such as high-resolution surface imaging, force estimation, slip detection, or thermal feedback. Supporting different functions typically means switching between different materials, configurations, or mechanical structures, which is a practical limitation. A unified approach that captures multiple tactile cues from a single compact sensor without reconfiguration remains a key gap.

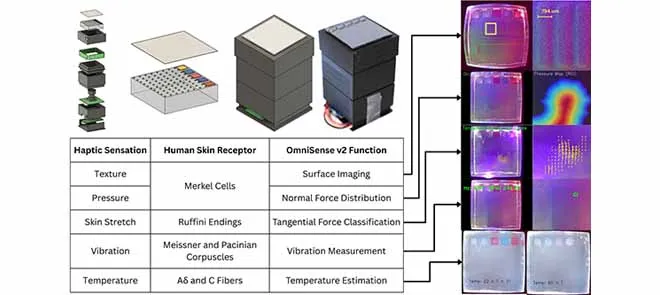

OmniSense v2 addresses this gap with a unified visuotactile sensor that senses multiple tactile cues through a single architecture. By combining a multi-layer sensing skin, controlled illumination, and camera-based processing, the system encodes multiple visual cues in a single deformable skin. It then selectively emphasizes each cue through illumination and vision processing, enabling multimodal sensing without physical reconfiguration.

The sensor has a compact, modular structure that integrates a camera, programmable illumination, and a soft sensing interface. The sensing skin is made from a gelatin-based material embedded with distinct functional layers: ultraviolet (UV) fluorescent pigments for dot tracking, thermochromic pigments for thermal sensing, and a Lambertian coating for texture imaging. The optical subsystem uses controlled illumination to highlight different signals from the same contact area. The modular housing provides repeatable geometry, which supports optimization and makes design iteration practical.
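One way to picture how controlled illumination separates these layers is a round-robin schedule in which each camera frame is captured under one illumination mode, and each mode feeds particular modalities. The following is a minimal Python sketch under stated assumptions: the mode names (`diffuse_visible`, `uv`, `color_visible`), the layer each one highlights, the modality assignments, and the helpers `frame_plan`, `per_mode_rate`, and `modality_sources` are all hypothetical and are not taken from the OmniSense v2 design.

```python
from dataclasses import dataclass
from itertools import cycle, islice


@dataclass(frozen=True)
class IlluminationMode:
    """One hypothetical illumination state and the modalities read from its frames."""
    name: str
    highlighted_layer: str
    modalities: tuple


# Hypothetical schedule: the real light sources, their order, and which layer
# each one targets are assumptions made for illustration only.
SCHEDULE = (
    IlluminationMode("diffuse_visible", "lambertian_coating",
                     ("surface_texture", "normal_contact", "vibration")),
    IlluminationMode("uv", "uv_fluorescent_dots", ("tangential_deformation",)),
    IlluminationMode("color_visible", "thermochromic_pigment", ("temperature_state",)),
)


def frame_plan(n_frames, schedule=SCHEDULE):
    """Pair each camera frame index with the mode active during that frame (round-robin)."""
    return list(enumerate(islice(cycle(schedule), n_frames)))


def per_mode_rate(camera_fps, schedule=SCHEDULE):
    """Update rate of each mode's stream when all modes share one camera in turn."""
    return camera_fps / len(schedule)


def modality_sources(schedule=SCHEDULE):
    """Map each modality to the name of the illumination mode whose frames feed it."""
    return {m: mode.name for mode in schedule for m in mode.modalities}
```

In this sketch, a hypothetical 60 fps camera cycling three modes refreshes each stream at `per_mode_rate(60) == 20.0` Hz. If vibration were read from only one mode's frames, the sampling theorem would cap the resolvable frequency at half that rate (10 Hz here), which illustrates the kind of tradeoff a time-multiplexed design has to balance.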

OmniSense v2 uses computer vision techniques to convert captured tactile frames into modality-specific outputs. A surface imaging stream visualizes texture and contact, and the extracted contact regions are used to estimate normal interactions. Tangential force interactions are identified by tracking deformation cues through the UV-activated dots. Vibration frequency is estimated from temporal variations in the contact signals, and temperature states are revealed by monitoring color changes in the thermochromic pigments. A key design choice is time-multiplexed sensing: illumination and processing steps are cycled so that multiple modalities are derived from the same sensor in rapid succession, enabling near-simultaneous multimodal readout.
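The per-modality extraction steps can be illustrated with simple stand-in functions. This is a minimal, dependency-free Python sketch, not the OmniSense v2 implementation. It assumes grayscale frames stored as lists of rows, a no-contact reference frame, dot centroids already detected in the UV frames, and a known baseline color for the thermochromic layer. The function names and default thresholds (`contact_region`, `mean_dot_shift`, `dominant_frequency`, `thermochromic_changed`) are hypothetical; contact area serves only as a proxy for normal interaction, and nearest-neighbour matching is a simple substitute for whatever dot tracker the system actually uses.

```python
import math

# Hypothetical per-modality extractors. Frames are grayscale images given as
# lists of rows; thresholds are illustrative, not calibrated values.


def contact_region(frame, reference, threshold=10):
    """Return (area_px, centroid) of pixels differing from the no-contact reference."""
    pts = [(x, y)
           for y, (row, ref_row) in enumerate(zip(frame, reference))
           for x, (v, r) in enumerate(zip(row, ref_row))
           if abs(v - r) > threshold]
    if not pts:
        return 0, None
    cx = sum(p[0] for p in pts) / len(pts)
    cy = sum(p[1] for p in pts) / len(pts)
    return len(pts), (cx, cy)


def mean_dot_shift(ref_dots, cur_dots):
    """Average (dx, dy) of UV dots, each matched to its nearest current dot.

    Assumes small inter-frame motion so nearest-neighbour matching is correct,
    and that cur_dots is non-empty.
    """
    dxs, dys = [], []
    for rx, ry in ref_dots:
        cx, cy = min(cur_dots, key=lambda c: (c[0] - rx) ** 2 + (c[1] - ry) ** 2)
        dxs.append(cx - rx)
        dys.append(cy - ry)
    return sum(dxs) / len(dxs), sum(dys) / len(dys)


def dominant_frequency(signal, sample_rate):
    """Frequency (Hz) of the strongest DFT bin of the mean-removed signal."""
    n = len(signal)
    mean = sum(signal) / n
    x = [s - mean for s in signal]
    best_k, best_mag = 0, 0.0
    for k in range(1, n // 2 + 1):  # skip DC, stop at the Nyquist bin
        re = sum(v * math.cos(2 * math.pi * k * i / n) for i, v in enumerate(x))
        im = -sum(v * math.sin(2 * math.pi * k * i / n) for i, v in enumerate(x))
        mag = math.hypot(re, im)
        if mag > best_mag:
            best_k, best_mag = k, mag
    return best_k * sample_rate / n


def thermochromic_changed(mean_rgb, baseline_rgb, threshold=30.0):
    """True if the skin's mean RGB color has moved far enough from its baseline."""
    return math.dist(mean_rgb, baseline_rgb) > threshold
```

For example, a contact signal sampled at 100 Hz that oscillates at 5 Hz yields `5.0` from `dominant_frequency`. The direct DFT loop is O(n²) and is kept only for readability; a practical pipeline would more likely use an FFT routine.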

OmniSense v2 demonstrates that a single, compact visuotactile sensor can provide multiple tactile modalities without hardware reconfiguration. Experiments show that it captures fine surface details beyond the resolving ability of human skin, performs strongly at recognizing tangential interactions, and reliably detects temperature states.

Beyond these performance metrics, the main contribution of this technology lies in its practical approach to unifying tactile modalities through a single engineered skin and an illumination and vision system. The aim is a more accessible and scalable multimodal tactile sensor, with the potential to replicate the sensitivity of human touch. These advances could benefit fields such as robotics, smart devices, healthcare, manufacturing, and wearable technologies.