Evaluating Event-Based Vision Sensing in Rain and Fog

Adverse weather can severely degrade the performance of advanced driver assistance systems (ADAS). Because autonomous vehicles are safety-critical systems, their perception must remain robust and reliable across all operating conditions. Various sensors, including event cameras, are being considered for automotive perception to meet this requirement.

Conventional frame-based cameras record full images at regular intervals. In contrast, each pixel of an event camera responds independently when it detects a change in light intensity, enabling fully asynchronous capture of light-intensity variations in a scene. As a result, event-based sensors offer microsecond temporal resolution, high dynamic range, and low latency. Because they capture changes in a scene rather than full images, they are also resilient to visual noise and distortion.
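The per-pixel behaviour described above can be illustrated with a minimal sketch of the commonly used idealized event model, in which a pixel emits an event each time its log intensity moves by a fixed contrast threshold from a stored reference. This is an illustration under stated assumptions, not the sensor used in the study: the function name `generate_events`, the sampled-intensity input, and the default threshold value are all hypothetical.

```python
import math

def generate_events(timestamps, intensities, contrast_threshold=0.2):
    """Return (timestamp, polarity) events for one idealized pixel.

    Assumption: an event fires whenever the log intensity differs from the
    pixel's reference level by at least `contrast_threshold`; the reference
    then steps toward the new level by one threshold per event.
    """
    events = []
    ref = math.log(intensities[0])  # reference log intensity of the pixel
    for t, value in zip(timestamps[1:], intensities[1:]):
        delta = math.log(value) - ref
        while abs(delta) >= contrast_threshold:
            polarity = 1 if delta > 0 else -1  # +1 = brighter, -1 = darker
            events.append((t, polarity))
            ref += polarity * contrast_threshold
            delta = math.log(value) - ref
    return events
```

In this model a static pixel produces no output at all, and raising the threshold reduces the number of events generated by the same intensity change.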

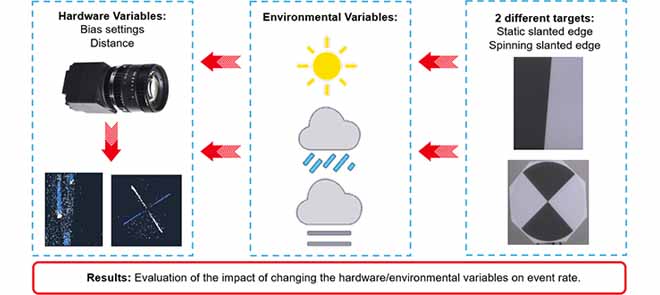

While these characteristics would be beneficial for ADAS, there has been little research into how event cameras are affected by adverse weather, such as rain and fog, and whether those effects can be mitigated. This study aims to characterize the performance of event cameras across varying rainfall rates, fog levels, and illumination under controlled settings. To evaluate whether this performance could be improved, the sensor's bias settings were tuned to mitigate the impact of rain.

A novel experiment evaluating event-based cameras in harsh weather, with a focus on rain and fog, was conducted at the AVL Center for Mobility and Sensor Testing. This facility was chosen because it allows lighting and weather conditions to be physically simulated under control. Testing was performed using a selection of image-quality targets, including a circular slanted-edge target that was spun at a constant frequency. This ensured that the event camera would always have motion (generating light-intensity changes) in the scene to detect. The rotation frequency was estimated from the event camera data and then compared with the actual frequency of the spinning target. From this, it was possible to determine when the spinning target became “visible” in fog conditions and during bias experiments.
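The text does not specify how the frequency was extracted from the event stream. As one possible approach, the sketch below evaluates a periodogram directly on event timestamps and returns the candidate frequency with the most power. The function name `estimate_frequency` and the candidate grid are assumptions made for illustration only.

```python
import cmath
import math

def estimate_frequency(event_times, candidate_freqs):
    """Return the candidate frequency (Hz) with the largest periodogram power.

    Assumption: events cluster periodically as the target edge sweeps past a
    pixel region, so the timestamps add coherently at the rotation frequency.
    Candidates must exclude 0 Hz, which always has maximal power.
    """
    best_freq, best_power = None, -1.0
    for f in candidate_freqs:
        # Sum of unit phasors, one per event, at the trial frequency f.
        s = sum(cmath.exp(-2j * math.pi * f * t) for t in event_times)
        power = abs(s) ** 2
        if power > best_power:
            best_freq, best_power = f, power
    return best_freq
```

For example, feeding in synthetic bursts of events repeating every 0.2 s with candidates from 1 Hz to 20 Hz in 0.5 Hz steps should select 5 Hz. In a measurement like the one described, the target could be treated as "visible" once the estimate agrees with the known rotation frequency within some tolerance.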

The study showed that both rainfall rate and raindrop size distribution contribute to the impact of rain on an event camera.

The fog experiments showed that the spinning target frequency could be detected at a visibility of 60 m, while the event rate reached a maximum at approximately 300 m. This indicates that even when the target was still obscured by fog, the event camera could accurately measure the frequency of the spinning target.

The study showed that adjusting the camera's bias settings could mitigate the effects of rain on the sensor. Specifically, both the contrast sensitivity threshold and low-pass filter bias settings reduced the event rate in rain to the point that the spinning target frequency could be measured correctly.
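To give a rough intuition for why these two settings reduce rain-induced events, the following toy sketch models a raindrop as a one-sample brightness spike at a single pixel and counts the events it triggers. Everything here is an assumption for illustration: the first-order filter, the threshold values, the trace, and the helper names `low_pass` and `count_events` are hypothetical and do not correspond to the actual bias parameters of the sensor in the study.

```python
import math

def low_pass(values, alpha):
    """First-order (exponential) low-pass filter; smaller alpha = lower bandwidth."""
    filtered, state = [], values[0]
    for v in values:
        state += alpha * (v - state)
        filtered.append(state)
    return filtered

def count_events(log_intensity, threshold):
    """Count threshold crossings of log intensity relative to a moving reference."""
    count, ref = 0, log_intensity[0]
    for value in log_intensity[1:]:
        while abs(value - ref) >= threshold:
            ref += threshold if value > ref else -threshold
            count += 1
    return count

# Hypothetical single-pixel trace: a brief spike standing in for a raindrop.
spike = [math.log(x) for x in [1.0, 1.0, 4.0, 1.0, 1.0, 1.0]]

raw_events = count_events(spike, threshold=0.25)
filtered_events = count_events(low_pass(spike, alpha=0.2), threshold=0.25)
coarse_events = count_events(spike, threshold=1.0)
```

In this toy model both the low-pass filter and the higher threshold produce fewer events from the transient than the unfiltered, low-threshold case. The trade-off is that overly aggressive settings would also suppress events from the signal of interest.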

This work demonstrates that adverse weather conditions can affect event camera performance. However, carefully tuning the bias settings of an event camera can reduce the impact of rain in a scene, while also preserving crucial environmental information.