MS-YOLO: Object Detection Based on YOLOv5 Optimized Fusion Millimeter-Wave Radar and Machine Vision

Advances in sensors and artificial intelligence have driven the rapid growth of autonomous vehicles. The environment perception system gives the vehicle an accurate understanding of traffic-scene semantics, which informs its behavior decisions. The perception module generally uses cameras, millimeter-wave radar, ultrasonic radar, and LiDAR sensors. A single sensor can capture only part of its surroundings. Combining multiple sensor modalities can significantly improve the performance of autonomous vehicles, but the modalities can also supply conflicting information.

Millimeter-wave radar and machine vision are two distinct sensor technologies, each with its own advantages and limitations. Millimeter-wave radar has strong penetrating capability and is unaffected by lighting conditions. Machine vision, by contrast, provides high-resolution image data but struggles in adverse weather and low-light scenarios. Integrating the two lets each compensate for the other's weaknesses and delivers more comprehensive object detection.

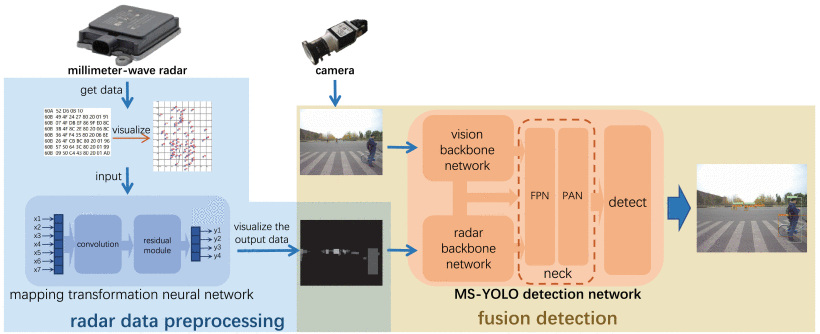

The paper proposes MS-YOLO, a deep learning algorithm for multi-data-source fusion built as a multi-source improvement of the You Only Look Once version 5 (YOLOv5) network. MS-YOLO improves on YOLOv5 in two respects: a dual backbone network and a middle-layer feature guidance structure. The dual backbone extracts feature information from the millimeter-wave radar and camera data, and detection accuracy improves through fusion and early feature extraction.
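To make the dual-backbone idea concrete, here is a minimal shape-bookkeeping sketch rather than the paper's actual architecture. It assumes each backbone emits feature maps at the three strides YOLOv5 detects on (8, 16, 32) and that fusion is a plain channel-wise concatenation per scale. The channel widths and the helper names `pyramid_shapes` and `fuse_by_concat` are illustrative assumptions, and the summary does not say how the middle-layer feature guidance structure combines the branches.

```python
def pyramid_shapes(input_size, channels, strides=(8, 16, 32)):
    """Return (channels, height, width) for each scale of a square input."""
    return [(c, input_size // s, input_size // s) for c, s in zip(channels, strides)]


def fuse_by_concat(camera_shapes, radar_shapes):
    """Concatenate two feature pyramids along the channel axis, scale by scale.

    Assumption: simple concatenation stands in for whatever fusion
    operator MS-YOLO really uses.
    """
    fused = []
    for (c_cam, h_cam, w_cam), (c_rad, h_rad, w_rad) in zip(camera_shapes, radar_shapes):
        if (h_cam, w_cam) != (h_rad, w_rad):
            raise ValueError("spatial sizes must match before concatenation")
        fused.append((c_cam + c_rad, h_cam, w_cam))
    return fused


# Hypothetical channel widths: a wider camera branch and a lighter radar branch.
camera = pyramid_shapes(640, channels=(128, 256, 512))
radar = pyramid_shapes(640, channels=(32, 64, 128))
fused = fuse_by_concat(camera, radar)

for shape in fused:
    print(shape)
```

The point of the sketch is that both branches must agree on spatial size at every scale before their features can be merged, which is why the radar input is typically brought into an image-like layout first.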

First, the millimeter-wave radar data is embedded into the YOLOv5 network, enabling the network to interpret radar echoes and incorporate them into its object detection process. This integration improves the model's ability to detect objects even when they are not visible to the camera, which makes it particularly useful in conditions such as fog or dust storms.
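One common way to feed radar returns to an image-based detector is to project each radar point onto the camera image plane and write its range and velocity into sparse image-sized channels. The sketch below illustrates that approach; whether MS-YOLO encodes radar data this way is an assumption. The `RadarPoint` fields, the pinhole intrinsics (`fx`, `fy`, `cx`, `cy`), and the `max_range` normalisation are all hypothetical, and the points are assumed to be expressed in the camera coordinate frame already.

```python
from dataclasses import dataclass


@dataclass
class RadarPoint:
    x: float         # lateral offset in metres (right positive), camera frame assumed
    y: float         # vertical offset in metres (down positive)
    z: float         # forward distance in metres
    velocity: float  # radial velocity in m/s


def project_to_pixel(point, fx, fy, cx, cy):
    """Pinhole projection; returns (u, v), or None if the point is behind the camera."""
    if point.z <= 0:
        return None
    u = fx * point.x / point.z + cx
    v = fy * point.y / point.z + cy
    return int(round(u)), int(round(v))


def rasterize_radar(points, width, height, fx, fy, cx, cy, max_range=100.0):
    """Build two sparse channels: normalised range and radial velocity.

    Cells with no radar return stay at 0.0. If several points land on the
    same pixel, the last one processed overwrites the earlier ones.
    """
    range_ch = [[0.0] * width for _ in range(height)]
    vel_ch = [[0.0] * width for _ in range(height)]
    for p in points:
        pixel = project_to_pixel(p, fx, fy, cx, cy)
        if pixel is None:
            continue
        u, v = pixel
        if 0 <= u < width and 0 <= v < height:
            range_ch[v][u] = min(p.z / max_range, 1.0)
            vel_ch[v][u] = p.velocity
    return range_ch, vel_ch


points = [
    RadarPoint(x=1.0, y=0.0, z=10.0, velocity=-3.0),   # lands inside the image
    RadarPoint(x=0.0, y=0.0, z=-5.0, velocity=1.0),    # behind the camera, skipped
    RadarPoint(x=10.0, y=0.0, z=10.0, velocity=2.0),   # projects outside the image
]
range_ch, vel_ch = rasterize_radar(points, width=64, height=48,
                                   fx=100.0, fy=100.0, cx=32.0, cy=24.0)
```

The resulting channels have the same height and width as the camera image, so a radar branch can process them with ordinary convolutional layers.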

Furthermore, the machine vision component of MS-YOLO processes high-resolution images to extract detailed object features and fine-grained contextual information. The integration of machine vision augments the model's precision in object classification and ensures accurate localization, even in complex scenes with multiple objects and occlusions.

The two complementary sensor modalities are fused through a novel optimization strategy: a dynamic weighting mechanism adaptively balances the influence of millimeter-wave radar and machine vision in real time. This adaptability lets the model combine the strengths of both sensors, improving detection accuracy and robustness across varied environmental conditions.
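The dynamic weighting idea can be sketched as a softmax gate over per-modality reliability scores, followed by a weighted sum of the two feature vectors. This is an illustration under stated assumptions, not the paper's formulation: the summary does not specify how the weights are computed, and the scores passed in here are hypothetical inputs that a learned gating layer would normally produce.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def fuse_features(camera_feat, radar_feat, camera_score, radar_score):
    """Blend two equal-length feature vectors with softmax-normalised weights.

    camera_score and radar_score are hypothetical reliability scores;
    a higher score gives that modality more influence on the result.
    """
    if len(camera_feat) != len(radar_feat):
        raise ValueError("feature vectors must have the same length")
    w_cam, w_radar = softmax([camera_score, radar_score])
    fused = [w_cam * c + w_radar * r for c, r in zip(camera_feat, radar_feat)]
    return fused, (w_cam, w_radar)


# Clear conditions: both modalities are trusted equally.
fused_clear, weights_clear = fuse_features([1.0, 2.0], [3.0, 4.0], 0.0, 0.0)

# Fog: the camera score drops, so the radar features dominate.
fused_fog, weights_fog = fuse_features([1.0, 2.0], [3.0, 4.0], -2.0, 1.0)
```

Because the weights always sum to one, the fused features stay on the same scale as the inputs regardless of which sensor dominates.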

Experimental results showcase the superior performance of MS-YOLO compared to traditional YOLOv5 and other state-of-the-art object detection models. In extensive evaluations, MS-YOLO consistently achieves higher accuracy in detecting objects in challenging scenarios, such as heavy rain, fog, and low-light conditions. Moreover, it excels in scenarios with complex backgrounds and occlusions, making it a promising solution for real-world applications.

MS-YOLO fuses information from millimeter-wave radar and machine vision to enhance object detection performance. Its adaptability to adverse conditions and its ability to detect objects beyond the visual spectrum make it valuable for autonomous vehicles, intelligent transportation systems, and security surveillance systems. This research opens new avenues for fusing multiple sensor modalities in computer vision and sensor integration.