Image-Based Force Estimation in Medical Applications: A Review

Robotic solutions have become integral to medical and healthcare systems in recent years. They are widely used in assistive technologies (hearing aids, communication aids, wheelchairs, spectacles, prostheses, pill organizers, memory aids, etc.), in rehabilitation, bionics, telemanipulation for remote assistance, and robot-mediated disinfection, and as surgical assistance in robotic surgery.

In medical robotics, robot-assisted minimally invasive surgery (MIS) demands high precision because it operates on soft and hard tissues in constrained spaces inside the human body. The slightest variation in the force applied by a surgeon or surgical tool can drastically affect soft, deformable tissues. Efficient force estimation and real-time haptic force feedback to the surgeon are therefore required during robot-assisted surgery. The haptic feedback helps the surgeon make diagnostic and interventional decisions.

Conventionally, a tiny force or strain sensor is mounted on the tip of a surgical instrument. Strain gauge, MEMS-based, piezoelectric, optical, and fiber Bragg grating sensors are highly accurate force sensors. Yet conveying the sensed force to the surgeon or operator remains problematic. Additionally, these sensors require biocompatible materials, high maintenance, and a strict sterilization regime.

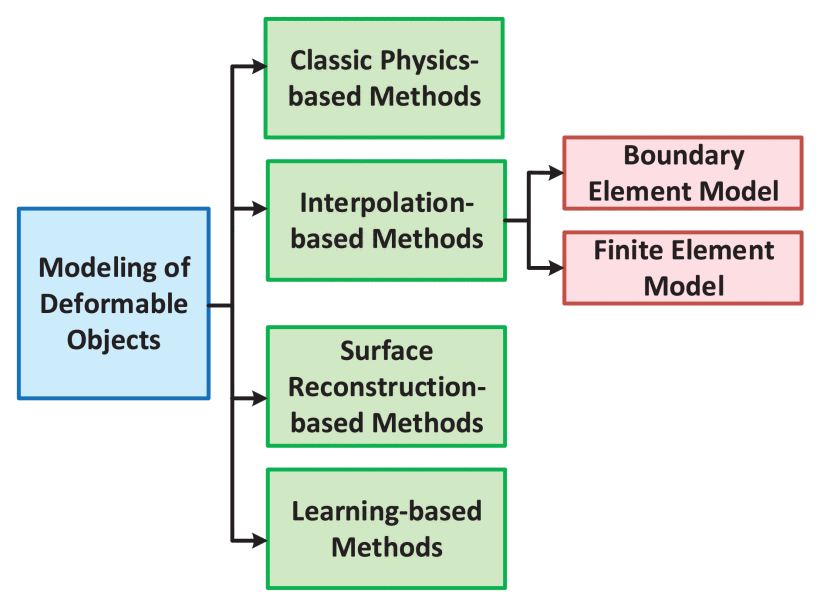

This study analyzes force estimation approaches for robot-based medical intervention, with a focus on image-based systems. Approaches to modeling the deformation of soft objects include physics-based, interpolation-based, surface reconstruction-based, and learning-based algorithms.

Mass-spring-damper models, mesh-based Finite Element (FE) models, and Boundary Element (BE) models are physics-based methods that rely on the mechanical properties of soft objects. Identifying the mechanical properties of different soft materials and incorporating them efficiently remains challenging.
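As a minimal illustration of the physics-based family, the sketch below integrates a single mass-spring-damper element, m*x'' + c*x' + k*x = F, with a semi-implicit Euler step and then recovers the applied force from the settled displacement. The function names and all parameter values (mass, damping, stiffness, time step) are hypothetical and are not taken from any tissue measurement; real tissue models couple many such elements.

```python
def simulate_msd(force, mass=0.01, damping=0.5, stiffness=200.0,
                 dt=1e-4, steps=20000):
    """Integrate m*x'' + c*x' + k*x = F from rest and return the final displacement.

    All parameter values are illustrative assumptions, not tissue data.
    """
    x, v = 0.0, 0.0
    for _ in range(steps):
        # Net force on the mass: applied load minus damper and spring reactions.
        a = (force - damping * v - stiffness * x) / mass
        v += a * dt          # semi-implicit Euler: update velocity first,
        x += v * dt          # then position with the new velocity
    return x


def estimate_force(displacement, stiffness=200.0):
    """Static force estimate from a settled displacement: F = k * x."""
    return stiffness * displacement


if __name__ == "__main__":
    x_final = simulate_msd(force=1.0)
    print("settled displacement [m]:", x_final)
    print("recovered force [N]:", estimate_force(x_final))
```

The recovered force is only as good as the assumed stiffness, which reflects the difficulty noted above: the mechanical properties must be known before the model can be used.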

A novel generation of haptic force feedback systems has emerged. With the proven efficacy of Convolutional Neural Networks (CNNs) in pattern recognition, image-based techniques have become increasingly popular in force measurement and estimation. Haptic information is extracted indirectly by employing artificial intelligence and deep learning algorithms.

Most image-based approaches start by filtering out the noisy components of the images through image preprocessing. A segmentation process then prepares the data for object detection. Edge detection with a Canny detector, which includes noise filtering and helps determine the object area, is an efficient solution for segmentation. A feature detection algorithm is employed to find the lines, edges, blobs, and contours within the image that relate to the object. Template matching methods are accurate but computationally expensive. The CNN is trained by pairing captured images of the object deformation with the ground truth provided. Typically, the force sensors mounted on medical instruments or robotic manipulators provide the ground truth force data.
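The preprocessing and edge-detection steps can be sketched in a few lines. The example below is a simplified stand-in for a Canny detector, not a full implementation: it smooths with a 3x3 Gaussian kernel, computes Sobel gradients, and thresholds the gradient magnitude, but omits non-maximum suppression and hysteresis thresholding. The function names, the threshold, and the synthetic test image are assumptions made for illustration.

```python
GAUSS = [[1 / 16, 2 / 16, 1 / 16],
         [2 / 16, 4 / 16, 2 / 16],
         [1 / 16, 2 / 16, 1 / 16]]
SOBEL_X = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]


def filter3x3(img, kernel):
    """Cross-correlate the image interior with a 3x3 kernel; border pixels stay 0."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for r in range(1, h - 1):
        for c in range(1, w - 1):
            out[r][c] = sum(kernel[i][j] * img[r + i - 1][c + j - 1]
                            for i in range(3) for j in range(3))
    return out


def edge_map(img, threshold=1.0):
    """Return a binary map: 1 where the smoothed gradient magnitude >= threshold."""
    smooth = filter3x3(img, GAUSS)       # noise filtering
    gx = filter3x3(smooth, SOBEL_X)      # horizontal intensity gradient
    gy = filter3x3(smooth, SOBEL_Y)      # vertical intensity gradient
    h, w = len(img), len(img[0])
    return [[1 if (gx[r][c] ** 2 + gy[r][c] ** 2) ** 0.5 >= threshold else 0
             for c in range(w)] for r in range(h)]


def make_square_image(size=16, lo=4, hi=12):
    """Synthetic test image: a bright square on a dark background."""
    return [[1.0 if lo <= r < hi and lo <= c < hi else 0.0
             for c in range(size)] for r in range(size)]


if __name__ == "__main__":
    for row in edge_map(make_square_image()):
        print("".join("#" if e else "." for e in row))
```

Because non-maximum suppression is left out, the detected outline is a few pixels thick; a production pipeline would typically rely on an established library implementation instead.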

The CNN-based method has the advantage of being able to study any deformable or soft object, regardless of its mechanical properties. However, any learning-based system depends on specific datasets for training. Because these systems are still in their early stages, the available datasets are small. Global data sharing may provide the medical community with a sufficient, tailored dataset in the future.
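The dependence on paired image and force data can be made concrete with a toy supervised setup. In the sketch below, a linear model on one hand-crafted image feature stands in for the CNN purely to keep the example short, and it is fitted by gradient descent on a mean-squared error against force labels. The images, the "sensor" readings, and the linear relation between deformed area and force are entirely synthetic assumptions, and all function names are hypothetical.

```python
def make_image(side, size=16):
    """Toy image: bright background (1.0) with a dark deformed patch (0.0)."""
    return [[0.0 if r < side and c < side else 1.0 for c in range(size)]
            for r in range(size)]


def deformed_fraction(image):
    """Hand-crafted feature: fraction of dark pixels in the image."""
    pixels = [p for row in image for p in row]
    return 1.0 - sum(pixels) / len(pixels)


def sensor_force(side, size=16, gain=2.0):
    """Hypothetical ground-truth force reading, linear in the patch area."""
    return gain * side * side / (size * size)


def fit_linear(features, targets, lr=0.5, epochs=5000):
    """Fit force = w * feature + b by gradient descent on mean-squared error."""
    w, b = 0.0, 0.0
    n = len(features)
    for _ in range(epochs):
        errors = [w * x + b - y for x, y in zip(features, targets)]
        grad_w = 2.0 / n * sum(e * x for e, x in zip(errors, features))
        grad_b = 2.0 / n * sum(errors)
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


def train_and_predict(train_sides=(1, 2, 3, 4, 6, 7, 8), test_side=5):
    """Train on labeled pairs, then predict the force for a held-out image."""
    xs = [deformed_fraction(make_image(s)) for s in train_sides]
    ys = [sensor_force(s) for s in train_sides]   # ground truth from the "sensor"
    w, b = fit_linear(xs, ys)
    x_test = deformed_fraction(make_image(test_side))
    return w * x_test + b, sensor_force(test_side)


if __name__ == "__main__":
    predicted, measured = train_and_predict()
    print("predicted force:", predicted, "measured force:", measured)
```

The model never sees stiffness or any other mechanical property; it learns only from the labeled pairs, so its accuracy is bounded by how well those pairs cover the deformations it will encounter.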

Incorporating advanced imaging techniques is expected to improve the results of CNN-based force estimation. Robust analysis and effective ground truth generation can significantly enhance the performance of learning-based estimation systems.