EV-Fusion: A Novel Infrared and Low-Light Color Visible Image Fusion Network Integrating Unsupervised Visible Image Enhancement

The EV-Fusion model presents a novel method for fusing infrared and low-light visible images. By combining the two modalities, it reduces redundant information and overcomes the limitation that a single-band image cannot capture all of a scene's details.

Infrared images reveal thermal features in low-light conditions but suffer from low resolution and poor texture. Visible images, in contrast, provide high-resolution detail, but when captured in low light they suffer from low brightness, reduced contrast, and color distortion.

Current fusion methods rely either on traditional algorithms with hand-crafted feature extraction or on deep learning models optimized for daytime scenes. Both perform poorly in low light, and existing enhancement techniques often prioritize intensity over color information, leading to incomplete fusion results.

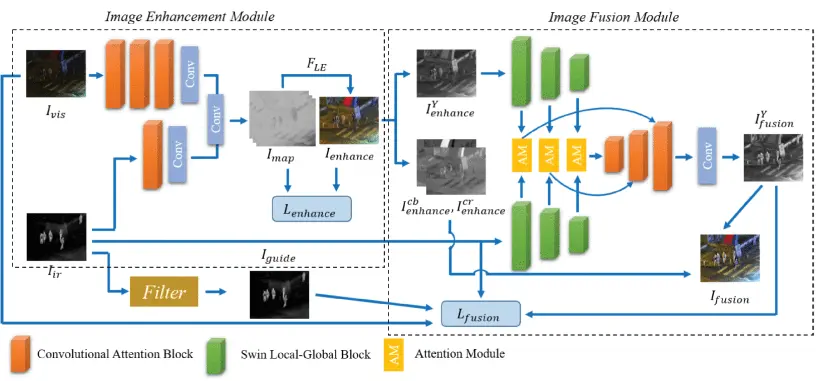

EV-Fusion combines infrared and low-light color visible images to enhance scene detail, and it outperforms two-stage strategies, which enhance and fuse in separate steps, in both subjective and objective evaluations. The model consists of two jointly trained modules: a color visible image enhancement module and an intensity image fusion module.

The color enhancement module takes an unsupervised approach, using a light-enhancement curve to improve visibility in low-light conditions and enhance both intensity and color. It is trained with illumination, color, and smoothness losses, which respectively boost brightness, keep colors natural, and enforce spatial consistency.
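The curve-based enhancement can be sketched as follows. The summary does not give the curve's exact form, so this minimal sketch assumes the quadratic light-enhancement curve popularized by Zero-DCE, LE(x) = x + αx(1 − x), applied iteratively to values in [0, 1], together with Zero-DCE-style stand-ins for the illumination (exposure), color, and smoothness losses. All function names, the 0.6 exposure target, and the patch size are illustrative assumptions, not EV-Fusion's actual settings.

```python
# Hypothetical sketch: Zero-DCE-style curve and losses, NOT EV-Fusion's exact formulation.

def le_curve(x, alpha):
    """One curve step; maps [0, 1] onto [0, 1] when alpha is in [-1, 1]."""
    return x + alpha * x * (1.0 - x)

def enhance_pixel(x, alphas):
    """Apply the curve once per iteration, each iteration with its own alpha."""
    for a in alphas:
        x = le_curve(x, a)
    return x

def exposure_loss(gray, patch=2, well_exposed=0.6):
    """Illumination term: mean |patch mean - target level| over non-overlapping patches."""
    h, w = len(gray), len(gray[0])
    gaps = []
    for i in range(0, h - patch + 1, patch):
        for j in range(0, w - patch + 1, patch):
            vals = [gray[i + di][j + dj] for di in range(patch) for dj in range(patch)]
            gaps.append(abs(sum(vals) / len(vals) - well_exposed))
    return sum(gaps) / len(gaps)

def color_constancy_loss(rgb):
    """Color term (gray-world prior): squared gaps between the R, G, B channel means."""
    pixels = [px for row in rgb for px in row]
    r, g, b = (sum(px[c] for px in pixels) / len(pixels) for c in range(3))
    return (r - g) ** 2 + (r - b) ** 2 + (g - b) ** 2

def smoothness_loss(alpha_map):
    """Smoothness term: simplified total-variation penalty on a per-pixel alpha map (>= 2x2)."""
    h, w = len(alpha_map), len(alpha_map[0])
    dx = [(alpha_map[i][j + 1] - alpha_map[i][j]) ** 2 for i in range(h) for j in range(w - 1)]
    dy = [(alpha_map[i + 1][j] - alpha_map[i][j]) ** 2 for i in range(h - 1) for j in range(w)]
    return (sum(dx) + sum(dy)) / (len(dx) + len(dy))
```

With α in [−1, 1] the curve is monotonic and fixes 0 and 1, so repeated positive-α steps brighten dark pixels without clipping; for example, `enhance_pixel(0.2, [1.0, 1.0])` lifts 0.2 to about 0.59.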

Image contrast is measured with the standard deviation (STD), which reflects how far pixel values deviate from their mean, computed in YCbCr color space. Non-reference loss functions, which require no ground-truth fused image, guide the integration of the enhanced visible intensity with the infrared image, improving both intensity and color.
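As a hedged illustration of the contrast measure, the sketch below converts 8-bit RGB pixels to YCbCr with the ITU-R BT.601 full-range (JFIF) coefficients and takes the population standard deviation of the luma channel. Restricting STD to the Y channel, and the function names, are assumptions; the summary only says the measure is computed in YCbCr space.

```python
import statistics

def rgb_to_ycbcr(r, g, b):
    """ITU-R BT.601 full-range (JFIF) conversion for 8-bit RGB values."""
    y = 0.299 * r + 0.587 * g + 0.114 * b
    cb = 128 - 0.168736 * r - 0.331264 * g + 0.5 * b
    cr = 128 + 0.5 * r - 0.418688 * g - 0.081312 * b
    return y, cb, cr

def contrast_std(rgb_image):
    """Population standard deviation of the Y (luma) channel, used as a contrast score."""
    lumas = [rgb_to_ycbcr(*px)[0] for row in rgb_image for px in row]
    return statistics.pstdev(lumas)
```

A flat image scores 0, while an image split evenly between black and white reaches the 8-bit maximum of 127.5, so a higher STD indicates a wider spread of intensities.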

The intensity image fusion module uses the Swin Local-Global Block (SLGB) to extract local and global features, combining convolutional neural networks and transformers to better represent infrared content while preserving visible details. It is trained with a structure loss and a gradient loss, combined in a total loss function, to maintain structural features and preserve as much texture detail as possible.
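The fusion module's loss terms can be sketched in a similar spirit. Their exact definitions are not given in this summary, so the sketch assumes common choices from the fusion literature: a gradient loss that pulls the fused image's Sobel gradients toward the per-pixel maximum of the infrared and visible gradients, and a structure loss of 1 − SSIM averaged over both sources. The SSIM here is a simplified single-window version, and the weights `w_struct` and `w_grad` are hypothetical. The SLGB itself is not sketched.

```python
# Hypothetical loss sketch on grayscale images given as nested lists in [0, 1].

def sobel_magnitude(img):
    """|Gx| + |Gy| Sobel response on interior pixels (borders are skipped)."""
    h, w = len(img), len(img[0])
    out = []
    for i in range(1, h - 1):
        row = []
        for j in range(1, w - 1):
            gx = (img[i - 1][j + 1] + 2 * img[i][j + 1] + img[i + 1][j + 1]
                  - img[i - 1][j - 1] - 2 * img[i][j - 1] - img[i + 1][j - 1])
            gy = (img[i + 1][j - 1] + 2 * img[i + 1][j] + img[i + 1][j + 1]
                  - img[i - 1][j - 1] - 2 * img[i - 1][j] - img[i - 1][j + 1])
            row.append(abs(gx) + abs(gy))
        out.append(row)
    return out

def gradient_loss(fused, ir, vis):
    """Mean L1 gap between fused gradients and the per-pixel max of source gradients."""
    gf, gi, gv = sobel_magnitude(fused), sobel_magnitude(ir), sobel_magnitude(vis)
    diffs = [abs(gf[i][j] - max(gi[i][j], gv[i][j]))
             for i in range(len(gf)) for j in range(len(gf[0]))]
    return sum(diffs) / len(diffs)

def global_ssim(x, y, data_range=1.0):
    """Single-window SSIM over the whole image (standard SSIM uses local windows)."""
    a = [v for row in x for v in row]
    b = [v for row in y for v in row]
    n = len(a)
    mx, my = sum(a) / n, sum(b) / n
    vx = sum((v - mx) ** 2 for v in a) / n
    vy = sum((v - my) ** 2 for v in b) / n
    cov = sum((p - mx) * (q - my) for p, q in zip(a, b)) / n
    c1, c2 = (0.01 * data_range) ** 2, (0.03 * data_range) ** 2
    return ((2 * mx * my + c1) * (2 * cov + c2)) / ((mx ** 2 + my ** 2 + c1) * (vx + vy + c2))

def total_fusion_loss(fused, ir, vis, w_struct=1.0, w_grad=10.0):
    """Hypothetical weighted sum of a structure term and a gradient term."""
    l_struct = 1.0 - 0.5 * (global_ssim(fused, ir) + global_ssim(fused, vis))
    return w_struct * l_struct + w_grad * gradient_loss(fused, ir, vis)
```

Taking the per-pixel maximum of the two gradient maps means the fused image is rewarded for keeping whichever source has the sharper edge at each location.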

The final image is generated by multiscale feature reconstruction, which combines the enhanced color information with the fused intensity. Within the Swin-based architecture, a bilateral-guided salience map directs attention to critical infrared regions, emphasizing important targets while suppressing irrelevant background texture.
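A common way to reattach color to a fused intensity image, shown here as a simplified stand-in rather than EV-Fusion's multiscale reconstruction, is to keep the Cb and Cr chroma channels of the enhanced visible image, replace its luma with the fused Y, and convert back to RGB. The BT.601 full-range coefficients and the function names are assumptions for illustration.

```python
def rgb_to_ycbcr(r, g, b):
    """ITU-R BT.601 full-range (JFIF) RGB -> YCbCr for 8-bit values."""
    y = 0.299 * r + 0.587 * g + 0.114 * b
    cb = 128 - 0.168736 * r - 0.331264 * g + 0.5 * b
    cr = 128 + 0.5 * r - 0.418688 * g - 0.081312 * b
    return y, cb, cr

def ycbcr_to_rgb(y, cb, cr):
    """Inverse BT.601 full-range conversion, clamped to [0, 255]."""
    r = y + 1.402 * (cr - 128)
    g = y - 0.344136 * (cb - 128) - 0.714136 * (cr - 128)
    b = y + 1.772 * (cb - 128)
    return tuple(min(255.0, max(0.0, c)) for c in (r, g, b))

def recolor(fused_y, enhanced_rgb):
    """Combine a fused luma map with the chroma of the enhanced visible image."""
    out = []
    for y_row, rgb_row in zip(fused_y, enhanced_rgb):
        row = []
        for y, px in zip(y_row, rgb_row):
            _, cb, cr = rgb_to_ycbcr(*px)  # keep visible chroma, discard visible luma
            row.append(ycbcr_to_rgb(y, cb, cr))
        out.append(row)
    return out
```

Because only the luma is replaced, a brighter fused Y can push saturated colors out of gamut, which is why the inverse conversion clamps each channel.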

Quantitative evaluations show that EV-Fusion consistently outperforms nine state-of-the-art methods, including autoencoder-based, generative adversarial network-based, and convolutional neural network-based approaches.

Extensive experiments on the LLVIP and MSRS datasets, run on two NVIDIA GeForce RTX 3090 GPUs, show that EV-Fusion achieves high scores across several quantitative metrics, including visual information fidelity, average gradient, and entropy. It also scores well on spatial frequency, natural image quality, and color quality.
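Three of the no-reference metrics mentioned above have short, widely used definitions, sketched here in plain Python for grayscale images given as nested lists. Normalization conventions for average gradient and spatial frequency vary slightly between papers, so these versions may not reproduce the exact numbers reported for EV-Fusion; the function names are illustrative.

```python
import math

def entropy(gray_u8):
    """EN: Shannon entropy (bits) of the 8-bit gray-level histogram."""
    pixels = [v for row in gray_u8 for v in row]
    counts = [0] * 256
    for v in pixels:
        counts[v] += 1
    n = len(pixels)
    return -sum((c / n) * math.log2(c / n) for c in counts if c)

def average_gradient(img):
    """AG: mean of sqrt((dx^2 + dy^2) / 2) over forward differences."""
    h, w = len(img), len(img[0])
    total = 0.0
    for i in range(h - 1):
        for j in range(w - 1):
            dx = img[i][j + 1] - img[i][j]
            dy = img[i + 1][j] - img[i][j]
            total += math.sqrt((dx * dx + dy * dy) / 2)
    return total / ((h - 1) * (w - 1))

def spatial_frequency(img):
    """SF = sqrt(RF^2 + CF^2) from row and column first differences, normalized by M*N."""
    h, w = len(img), len(img[0])
    rf2 = sum((img[i][j] - img[i][j - 1]) ** 2 for i in range(h) for j in range(1, w)) / (h * w)
    cf2 = sum((img[i][j] - img[i - 1][j]) ** 2 for i in range(1, h) for j in range(w)) / (h * w)
    return math.sqrt(rf2 + cf2)
```

Higher values of all three generally indicate more information (EN) or more fine texture and edge detail (AG, SF) in the fused image.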

Ablation studies indicate that the bilateral-guided salience map improves the fusion process by highlighting infrared target regions and balancing infrared and visible features. They also confirm the contribution of the illumination, color, and structure losses, which work together to optimize training and improve overall fusion performance.

Overall, EV-Fusion marks a significant advance in infrared and low-light image fusion. It effectively addresses the limitations of existing methods and delivers superior visual results. Although it is more complex than some competing methods, it achieves strong results with good computational efficiency, making it a promising option for night-time applications.