Classification Strategies for Radar-Based Continuous Human Activity Recognition With Multiple Inputs and Multilabel Output

Accidental falls are a major concern for independent living among older or sick people. Various sensors are available for fall detection, and wearable sensors are among the most popular. However, they have limitations: they must be worn constantly, which may not be feasible for many people.

Cameras are contactless sensors and are also a common choice. However, they raise privacy concerns and require strategic installation for continuous monitoring.

Radar is also a contactless remote-sensing modality, but it raises fewer privacy concerns because radar data are typically less interpretable to humans. Hence, radar is an interesting alternative for fall detection and for recognizing other daily activities.

Here, the researchers investigate radar-based continuous human activity recognition for fall detection. Continuous means that activities such as walking, standing, sitting down, bending, and falling can follow one another in an uninterrupted time stream. The system should recognize and monitor these activities, replicating a close-to-realistic scenario.

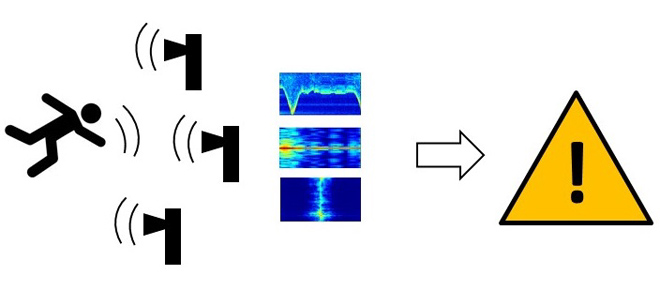

In previous work, the researchers introduced a multilabel classification approach for continuous human activity recognition. This approach first splits the continuous time stream into shorter segments (e.g., 30 seconds long), then computes the range-time map, the range-Doppler map, and the Doppler-time map (spectrogram) of each segment, and finally performs the classification based on these three inputs.
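The segmentation and the three representations can be sketched in Python with NumPy. This is a minimal sketch, not the authors' processing chain: it assumes an FMCW radar delivering real-valued beat-signal samples as a matrix of shape (chirps, samples per chirp), and the function names `segment_stream` and `radar_representations`, the Hann windows, the mean-subtraction clutter filter, and the 64-chirp spectrogram frames are all hypothetical choices.

```python
import numpy as np


def to_db(x):
    """Magnitude in decibels (the small offset avoids log of zero)."""
    return 20 * np.log10(np.abs(x) + 1e-12)


def segment_stream(stream, seg_len):
    """Split a continuous (chirps, samples) stream into non-overlapping segments."""
    n_segments = stream.shape[0] // seg_len
    # Drop trailing chirps that do not fill a complete segment.
    return stream[: n_segments * seg_len].reshape(n_segments, seg_len, stream.shape[1])


def radar_representations(segment, frame_len=64):
    """Return (range-time, range-Doppler, Doppler-time) maps in dB for one segment.

    segment: real array of shape (n_chirps, n_samples), i.e. slow time x fast time.
    """
    n_chirps, n_samples = segment.shape
    # Range FFT along fast time; rfft keeps only the non-negative beat frequencies.
    profiles = np.fft.rfft(segment * np.hanning(n_samples), axis=1)
    # Hypothetical static-clutter removal: subtract the mean profile over slow time.
    profiles = profiles - profiles.mean(axis=0, keepdims=True)

    range_time = profiles.T  # (range bins, chirps)

    # Doppler FFT along slow time over the whole segment, zero Doppler centred.
    range_doppler = np.fft.fftshift(np.fft.fft(profiles, axis=0), axes=0)  # (Doppler, range)

    # Spectrogram: short-time FFT of the range-summed slow-time signal.
    slow_time = profiles.sum(axis=1)
    hop = frame_len // 2
    window = np.hanning(frame_len)
    frames = np.stack([slow_time[s : s + frame_len] * window
                       for s in range(0, n_chirps - frame_len + 1, hop)])
    doppler_time = np.fft.fftshift(np.fft.fft(frames, axis=1), axes=1).T  # (Doppler, frames)

    return to_db(range_time), to_db(range_doppler), to_db(doppler_time)


# Usage on synthetic data: 1024 chirps x 128 samples, 256-chirp segments.
rng = np.random.default_rng(0)
stream = rng.standard_normal((1024, 128))
segments = segment_stream(stream, seg_len=256)
rt, rd, dt = radar_representations(segments[0])
print(segments.shape, rt.shape, rd.shape, dt.shape)
# (4, 256, 128) (65, 256) (256, 65) (64, 7)
```

A fixed chirp count per segment corresponds to a fixed duration, such as 30 seconds, once the chirp repetition interval is known; the sizes above are toy values chosen only to keep the example small.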

This work extends that approach and investigates various fusion strategies, two different neural networks for the classification, and the effect of different threshold values on the network's decision. It mainly compares signal-level fusion with decision-level fusion of the three radar data representations (range-time, range-Doppler, and spectrogram), i.e., stacking the data and feeding it into a single neural network versus using three individual classifiers and averaging their outputs. Regarding the networks, the study compares a relatively simple convolutional neural network (CNN) with three convolutional stages to a ResNet-18.
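The two fusion strategies differ only in where the three representations are combined, which a short NumPy sketch can make concrete. Here `make_toy_classifier` is a hypothetical stand-in for a trained network (a random linear layer followed by a sigmoid), the 64 × 64 map size assumes every representation has been resized to a common shape, and the use of one independent sigmoid per class is an assumption about how the multilabel output is produced.

```python
import numpy as np

N_CLASSES = 10  # the study reports 10-class activity recognition


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def make_toy_classifier(in_channels, height, width, rng):
    """Hypothetical stand-in for a trained CNN: a random linear map + sigmoid."""
    weights = rng.standard_normal((in_channels * height * width, N_CLASSES)) * 0.01

    def classify(x):
        # x: (in_channels, height, width) -> independent per-class probabilities
        return sigmoid(x.reshape(-1) @ weights)

    return classify


rng = np.random.default_rng(0)
H = W = 64  # assumed common size after resizing each map
range_time, range_doppler, spectrogram = (rng.standard_normal((H, W)) for _ in range(3))

# Signal-level fusion: stack the three maps as channels of ONE network input.
stacked = np.stack([range_time, range_doppler, spectrogram])  # (3, H, W)
fused_net = make_toy_classifier(3, H, W, rng)
p_signal = fused_net(stacked)

# Decision-level fusion: one classifier per representation, then average outputs.
single_nets = [make_toy_classifier(1, H, W, rng) for _ in range(3)]
per_rep = [net(rep[np.newaxis]) for net, rep in zip(single_nets, stacked)]
p_decision = np.mean(per_rep, axis=0)

print(p_signal.shape, p_decision.shape)  # (10,) (10,)
```

Because each class gets its own sigmoid rather than sharing a softmax, several activities within one segment can be flagged at once. In a real setup, the toy classifiers would be replaced by the CNN or ResNet-18 under comparison.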

The radar data come from a publicly available dataset recorded with a setup of five radars. Accordingly, another question is whether and how to fuse the data from these five radars; the study compared signal-level fusion of the radars with no fusion.
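Signal-level fusion across radars can be pictured as extending the channel stack. The sketch below assumes each radar contributes the same three maps at a common, hypothetical 64 × 64 size, and it interprets "no fusion" as handling each radar's three-channel input separately; both are illustrative assumptions rather than the study's exact data layout.

```python
import numpy as np

N_RADARS = 5           # five radars in the measurement setup
N_REPRESENTATIONS = 3  # range-time, range-Doppler, spectrogram
H = W = 64             # assumed common map size after resizing

rng = np.random.default_rng(1)
# Hypothetical maps: maps[r, k] is representation k from radar r.
maps = rng.standard_normal((N_RADARS, N_REPRESENTATIONS, H, W))

# Signal-level fusion of radars and representations: one 15-channel input.
# Channels are ordered radar-major, i.e. channel r * 3 + k holds maps[r, k].
fused_input = maps.reshape(N_RADARS * N_REPRESENTATIONS, H, W)

# No radar fusion: five separate 3-channel inputs, one per radar.
per_radar_inputs = [maps[r] for r in range(N_RADARS)]

print(fused_input.shape, per_radar_inputs[0].shape)  # (15, 64, 64) (3, 64, 64)
```

With the fused layout, the network's first layer must accept 15 input channels; a standard ResNet-18 expects three-channel images, so its first convolution would need adapting.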

The main findings of this parameter study are as follows:

- ResNet-18 clearly outperformed the shallower CNN.

- All fusion methods performed similarly well when ResNet-18 was used as the classifier.

- Regarding fusion strategies, fusing the radars’ data and the data representations at the signal level might be most beneficial.

- This configuration yielded the highest overall classification accuracy and the best fall detection performance in terms of the area under the curve (AUC) metric.

- For the output threshold, a value of 0.2 appears to be a good compromise between a high fall detection rate and a low false alarm rate.

- Combining signal-level fusion of all five radars with the best parameters yielded an overall 10-class activity recognition accuracy of 94.98%, a fall detection rate of 82.39%, and a false alarm rate of 4.4% for the fall class.
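The threshold trade-off and the metrics above can be illustrated with a small NumPy sketch. It assumes each network output is an independent per-class probability, that the fall detection rate is the recall of the fall class, TP / (TP + FN), and that the false alarm rate is its false-positive rate, FP / (FP + TN); the function names and the eight fall scores are made up for illustration and are not results from the study.

```python
import numpy as np


def multilabel_decision(probs, threshold=0.2):
    """Flag every class whose output probability reaches the threshold."""
    return probs >= threshold


def detection_and_false_alarm_rate(y_true, y_pred):
    """Detection rate = TP / (TP + FN); false alarm rate = FP / (FP + TN)."""
    y_true = np.asarray(y_true, dtype=bool)
    y_pred = np.asarray(y_pred, dtype=bool)
    tp = np.sum(y_true & y_pred)
    fn = np.sum(y_true & ~y_pred)
    fp = np.sum(~y_true & y_pred)
    tn = np.sum(~y_true & ~y_pred)
    return tp / (tp + fn), fp / (fp + tn)


def roc_auc(y_true, scores):
    """Threshold-free AUC via the Mann-Whitney statistic (ties count as 0.5)."""
    y_true = np.asarray(y_true, dtype=bool)
    scores = np.asarray(scores)
    pos, neg = scores[y_true], scores[~y_true]
    greater = (pos[:, None] > neg[None, :]).sum()
    ties = (pos[:, None] == neg[None, :]).sum()
    return (greater + 0.5 * ties) / (len(pos) * len(neg))


# Hypothetical fall-class outputs for eight segments (1 = a fall occurred).
fall_true = np.array([1, 1, 1, 0, 0, 0, 0, 0])
fall_prob = np.array([0.9, 0.35, 0.15, 0.25, 0.1, 0.05, 0.02, 0.01])

for t in (0.5, 0.2):
    det, fa = detection_and_false_alarm_rate(fall_true, multilabel_decision(fall_prob, t))
    print(f"threshold={t}: detection rate={det:.2f}, false alarm rate={fa:.2f}")
print(f"AUC={roc_auc(fall_true, fall_prob):.3f}")
# threshold=0.5: detection rate=0.33, false alarm rate=0.00
# threshold=0.2: detection rate=0.67, false alarm rate=0.20
# AUC=0.933
```

In this toy example, lowering the threshold from 0.5 to 0.2 catches one more fall at the cost of one false alarm, whereas the AUC summarizes how well falls are ranked above non-falls independently of any threshold.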

To conclude, the results highlight the effectiveness of multimodal radar data for precise, real-time activity recognition in a smart environment. However, future research should focus on further improving fall detection performance.